Author: Fernanda H. P. de Moraes, Kangjoo Lee, and Julia W. Y. Kam |

| The primary mission of the OHBM Women Faculty Special Interest Group (WF-SIG) is to help women principal investigators (PIs) connect and network with each other. Peer-to-peer networking is an effective, low-cost intervention that encourages female neuroscientists to collaborate in cross-disciplinary teams and overcome gender-related challenges (1). Our official in-person launch event at this year's annual OHBM meeting marks the first step towards connecting and empowering women-led research teams in the neuroimaging community. Logo Design: Jingyuan Chen, Arts Officer At the launch event, we unveiled the new logo that was crafted for the OHBM Women Faculty SIG by our Arts Officer, Dr. Jingyuan Chen (Assistant Professor, Martinos Center for Biomedical Imaging). |

Naomi Gaggi

Scientific writing workshop by Bradley Voytek, hosted by the SP-SIG

Communications Committee Lay Media team

Invitation to public events on brain imaging science (English and French language)

More details are provided below. You are invited to attend and to share this invitation with anyone who might be interested in this event.

Place: Grande Bibliothèque (475 Boul. de Maisonneuve E, Montréal, at Berri-UQAM metro station)

Date: Tuesday July 18, 2023

Time (talks in French): 6:00 pm (doors open at 5:30 pm)

Time (talks in English): 8:00 pm (doors open at 7:30 pm)

Tickets: free, but limited numbers available! Register quickly using the link below.

Register for French lectures: imagerieducerveau.eventbrite.ca

Register for English lectures: brainimaging.eventbrite.ca

Talks are aimed at the general public, and for each language session, there will be three talks of around 20 minutes, along with a Q&A session after.

Talks in English will be given by:

Dr. Alan Evans (A hitch-hiker's guide to mapping the brain)

Dr. Emily Coffey (Altering sleep and memory with sound)

Dr. Robert Zatorre (The neuroscience of music and why we love it)

Talks in French will be given by:

Dr. Sylvain Baillet (Tempête dans la boîte crânienne !)

Dr. Delphine Raucher-Chéné (Apports de la neuroimagerie en psychiatrie : en quoi étudier le cerveau nous aide à mieux comprendre les problèmes de santé mentale ?)

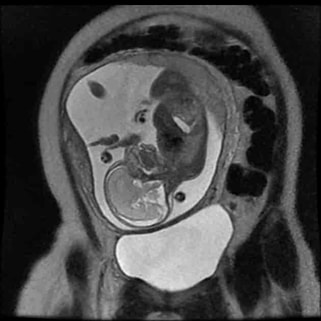

Dr. Anne Gallagher (Toute la lumière sur le développement du cerveau)

Le mardi 18 juillet, des neuroscientifiques d'universités québécoises présenteront les recherches liées au fascinant domaine de l'imagerie du cerveau. Les conférences seront données en anglais et en français, et sont gratuites pour le grand public.

Plus de détails sont fournis ci-dessous et dans les affiches ci-jointes. Vous êtes invités à y assister et à partager cette invitation avec toute personne susceptible d'être intéressée par cet événement.

Place : Grande Bibliothèque (475 Boul. de Maisonneuve E, Montréal, à la station de métro Berri-UQAM)

Date de l'événement : mardi 18 juillet 2023

Heure (conférences en français) : 18h00 (ouverture des portes à 17h30)

Heure (conférences en anglais) : 20h00 (ouverture des portes à 19h30)

Billets : gratuits, mais en nombre limité ! Enregistrez-vous rapidement en utilisant le site ci-dessous.

Billets pour les conférences en français : imagerieducerveau.eventbrite.ca

Billets pour les conférences en anglais : brainimaging.eventbrite.ca

Les conférences s'adressent au grand public et chaque session linguistique comprendra trois conférences d'environ 20 minutes, suivies d'une séance de questions-réponses.

Les conférences en français seront données par :

Dr. Sylvain Baillet ( Tempête dans la boîte crânienne ! )

Dre. Delphine Raucher-Chéné ( Apports de la neuroimagerie en psychiatrie : en quoi étudier le cerveau nous aide à mieux comprendre les problèmes de santé mentale ? )

Dre. Anne Gallagher ( Toute la lumière sur le développement du cerveau )

Les conférences en anglais seront données par :

Dr. Alan Evans ( A hitch-hiker's guide to mapping the brain )

Dre. Emily Coffey ( Altering sleep and memory with sound )

Dr. Robert Zatorre ( The neuroscience of music and why we love it )

OHBM's many committees and Special Interest Groups

Community-led events at #OHBM2023

Read on to learn about upcoming committee and SIG events at OHBM 2023!

And don't forget to check out our latest Neuroscience podcast episode, where Peter Bandettini and Alfie Wearn talk about these events! You can find it at your favorite podcast service here and on YouTube here.

Rahul Gaurav & Naomi L. Gaggi

Looking at the past and future of functional connectivity

Dr. Biswal is well-known for his seminal work in functional connectivity and continues his research in brain connectivity and signal processing using MRI. He is also a familiar guest on the NeuroSalience Podcast, having been featured in Season 3, Episode 5 in conversation with his former labmate Dr. Peter Bandettini.

In this interview, Rahul Gaurav and Naomi L. Gaggi talked with Dr. Biswal as a keynote speaker for the upcoming 2023 Organization for Human Brain Mapping Conference in Montreal, Canada. They cover his academic journey, his research, and the potential future of resting state functional magnetic resonance imaging.

Lavinia Uscătescu, with editing by Xinhui Li

Capturing the complex activity of the social brain

In her 2023 OHBM keynote address, she will highlight some of her recent results developing extensive neuroimaging and neurostimulation protocols in a population of autistic children. Using transcranial magnetic stimulation (TMS), she stimulated structures of the social brain (i.e. the parts of the brain responsible for processing information related to social interactions and cues) and followed this up with both neuroimaging and clinical assessments.

In this interview, Dr. Xujun Duan discusses how she overcame the challenges of implementing a research protocol that would adequately capture the complex activity of the social brain, as well as the rewarding moments she enjoyed during this process. Additionally, she offers career advice for early stage researchers who plan on pursuing an academic path.

Elisa Guma and Kevin Sitek

Using a variety of methods to map circuits in the primate brain

To address these questions, Dr. Minamimoto uses a range of methods including neuroimaging with functional MRI and PET as well as chemogenetic techniques such as Designer Receptors Activated by Designer Drugs (DREADDs), which are a class of proteins that allow scientists to control neural activity in awake, freely moving animals.

In this interview, Elisa Guma and Kevin Sitek talked with Dr. Minamimoto about his research program, the path he took to get there, and what we can expect from his 2023 Keynote address.

Read on to learn more!

Alfie Wearn and Faruk Gulban

Exploring quantitative MRI and 'in vivo histology'

Dr. Mezer’s lab is focused on mapping human brain structures during normal development and aging. In addition, it is focused on developing new approaches to characterize the structural changes associated with neurological disorders. Mezer’s main research tool is in vivo quantitative magnetic resonance imaging – qMRI. The Mezer lab is developing tools to biophysically explain the brain’s MRI signals at different levels and resolutions: from molecular local sources through cellular organization to the mapping of networks across the entire brain.

In this interview, we discuss the field of qMRI more broadly, touching upon the present and future interpretations of "in vivo histology." We also discuss Dr Mezer’s approach to mentorship, as well as the skills that would benefit future researchers in this field.

At OHBM 2023, Dr. Mezer will show us how combining multiple quantitative MRI measures can provide additional biological information about tissue composition and brain health.

You can find the video interview here and listen to the audio-only podcast version here (or on your podcast app of choice).

Johanna Bayer and LAVINIA CARMEN USCATESCU

Discussing deep brain stimulation and brain connectivity with Keynote presenter Andreas Horn

In this interview with Dr. Horn, we explore how deep brain stimulation can be used to better understand the human connectome, and how this work can be leveraged to improve patients’ lives. “In contrast to many other neuroimaging domains, there is a more or less direct translation [from Deep Brain Stimulation (DBS)] to clinical practice,” says Dr. Horn. For example, networks identified via DBS can be targeted with noninvasive stimulation methods such as multifocal transcranial direct current stimulation (tDCS) to improve conditions of patients with movement disorders like Parkinson's disease.

Dr. Horn also provides insight into ongoing discussions in the field on whether structural or functional measures provide better predictions for DBS outcomes. He explains why his lab has gradually shifted away from using patient-specific connectivity data to precise normative connectomes for determining which brain networks should be modulated for maximal effects.

In his keynote at OHBM 2023, Dr. Horn will give us a tour of his findings from years of work studying the effects of deep brain stimulation on the connectome across different disorders, ranging across neurological, neuropsychiatric, and psychiatric diseases. He will illustrate how his findings can be transferred across disorders to inform one another as well as how they can be further used to study neurocognitive effects and behaviors such as risk-taking and impulsivity.

You can find the video interview here and listen to the audio-only podcast version here (or on your podcast app of choice).

BLOG HOME

TUTORIALS

MEDIA

contributors

OHBM WEBSITE

Archives

January 2024

December 2023

November 2023

October 2023

September 2023

August 2023

July 2023

June 2023

May 2023

April 2023

March 2023

January 2023

December 2022

October 2022

September 2022

August 2022

July 2022

June 2022

May 2022

April 2022

March 2022

January 2022

December 2021

November 2021

October 2021

September 2021

August 2021

July 2021

June 2021

May 2021

April 2021

March 2021

February 2021

January 2021

December 2020

November 2020

October 2020

September 2020

June 2020

May 2020

April 2020

March 2020

February 2020

January 2020

December 2019

November 2019

October 2019

September 2019

August 2019

July 2019

June 2019

May 2019

April 2019

March 2019

February 2019

January 2019

December 2018

November 2018

October 2018

August 2018

July 2018

June 2018

May 2018

April 2018

March 2018

February 2018

January 2018

December 2017

November 2017

October 2017

September 2017

August 2017

July 2017

June 2017

May 2017

April 2017

March 2017

February 2017

January 2017

December 2016

November 2016

October 2016

September 2016

August 2016

July 2016

June 2016

May 2016

April 2016

RSS Feed

RSS Feed