
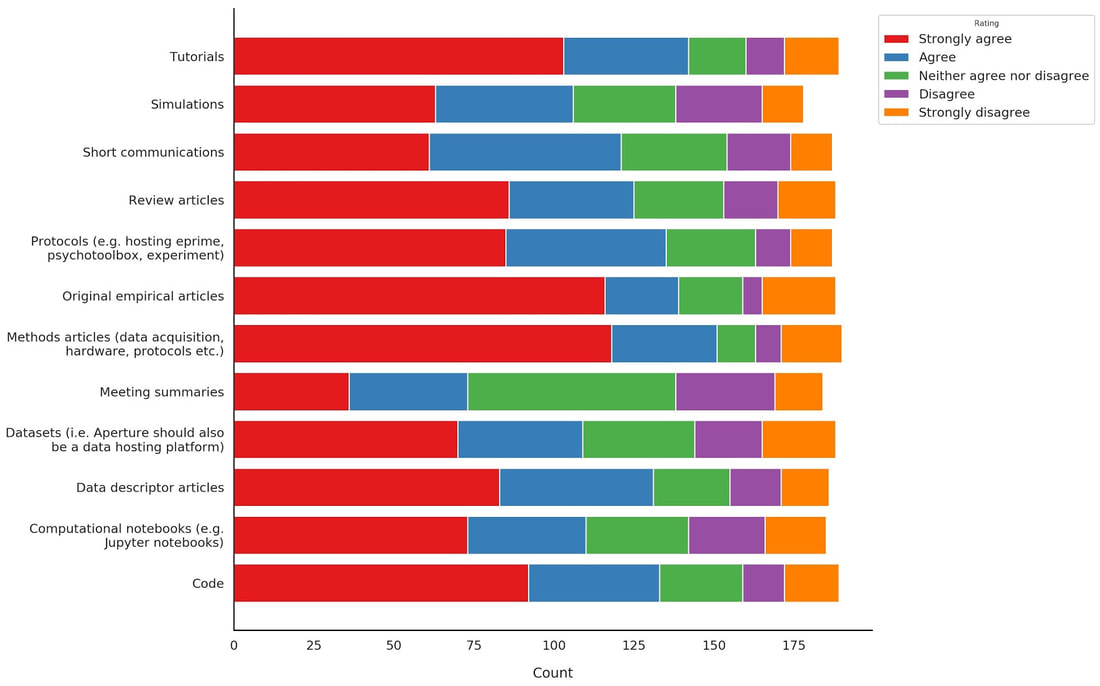

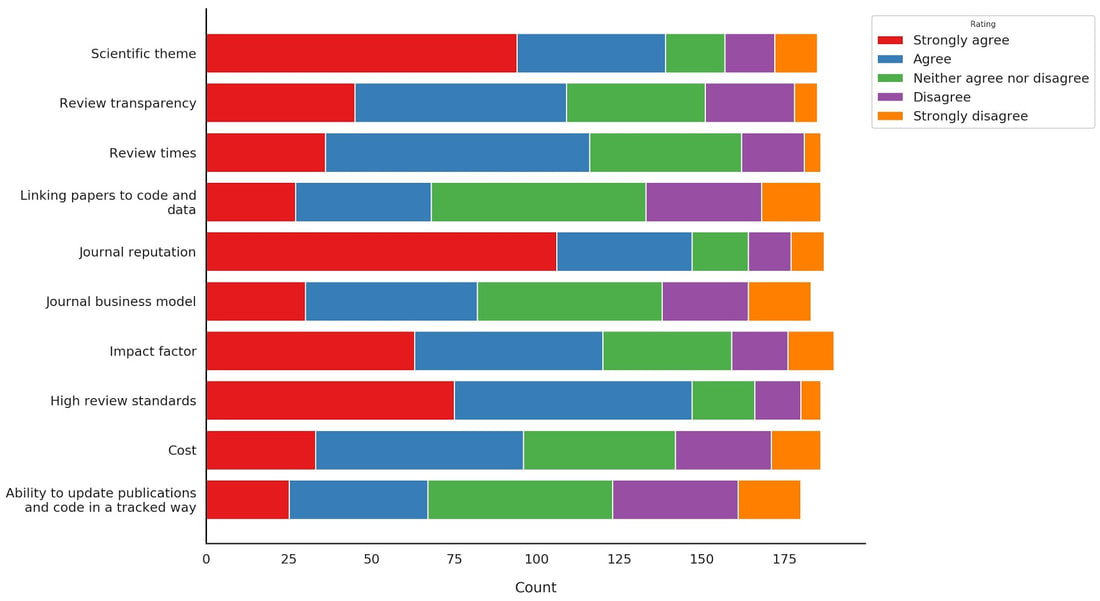

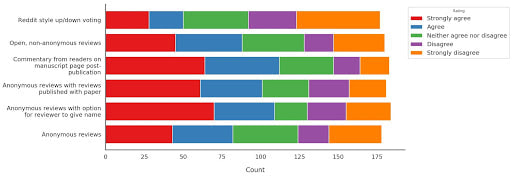

By: Elizabeth DuPre with the Aperture working groups

The Aperture survey has closed, and we’re excited to share the results! Here, we summarize our initial conclusions and outline some next steps for moving the conversation forward. If you’re interested in diving into the full dataset, anonymized responses are available here.

Aperture is an OHBM initiative to develop a new publishing platform. Envisioned as an open platform for publishing novel research objects, Aperture was created by TOPIC (The OHBM Publishing Initiative Committee) and received support from the OHBM Council in the winter of 2017. To better understand the publishing needs of the OHBM community, we launched a survey in December 2018 to capture feedback on several dimensions of the publishing process. After advertising on the blog, on social media, and on the OHBM mailing list, we received nearly 200 responses. Here, we report results for three of the surveyed dimensions: publishable research objects, reviewing models, and paths to financial sustainability.

If you are interested in examining these conclusions yourself or exploring other aspects of the data, be sure to check out our GitHub repository. There, you can access the anonymized data and these initial analyses, as well as an interactive environment for exploring them in your web browser using Binder.

In analyzing the survey results, our first question was whether respondents wanted an official OHBM publishing platform. For this sample of the OHBM membership, the answer was a clear ‘Yes,’ with over 85% of respondents in favor of developing Aperture. A majority of respondents hoped that Aperture would publish cutting-edge research objects, such as data descriptors and code, in addition to traditional empirical papers. These results strongly support our initial vision and solidify our commitment to developing this new publishing platform.
One key challenge in developing Aperture is balancing the publication of these and other innovative research objects with traditional publishing metrics such as the Impact Factor. Indeed, respondents indicated that these metrics, along with factors such as journal reputation and scientific theme, strongly influence their decision of where to publish. High review standards were also a strong selling point for publishing in a particular journal; thus, which reviewing model(s) Aperture should adopt was one of the key dimensions on which we sought community feedback.

Given that Aperture could publish a range of research objects, the first consideration is which kinds of submissions should be reviewed. To ensure that all research objects are held to the same standard, a majority of respondents indicated that they would like to see all submitted research objects reviewed, including code and data. This would be an important departure from most major journals to date, and, reflecting this novelty, opinions varied on how code reviews should be conducted. A clear majority of respondents (68%) wanted code to be reviewed for accessibility and for reproducing the reported results without error. A substantial minority (34%), however, also hoped that code would be reviewed for adherence to software development best practices. These represent very different levels of review, and as a community we will need to keep learning from other efforts as this conversation moves forward. Unsurprisingly, trainees were more likely than faculty and staff members to feel comfortable conducting code reviews, so we think the future looks bright for these efforts.

We were also interested in how reviews should take place. That is, should reviews be open or closed, signed or unsigned?
The figure below shows that no single model was strongly favored, although a majority of respondents preferred greater transparency in both the content and the authorship of reviews. Post-publication reader commentaries were also popular, though there was less enthusiasm for an up- and down-voting system.

In keeping with this departure from a traditional journal, our survey suggests that OHBM members do not want Aperture’s content behind a paywall. Instead, there was a clear desire for open access: only 25% of respondents supported a paywall, even when it was paired with free journal subscriptions for OHBM members. You can see the full range of sustainability models surveyed, as well as the distributions of responses, in our interactive survey analysis. To fund an open-access model, respondents indicated that they would like OHBM to continue its financial support, especially for the first three years. If Aperture does become self-supporting during this time, a majority of respondents hoped that it would adopt a non-profit model rather than generate revenue for OHBM.

Respondents also expressed willingness to support Aperture by paying a publication fee of around $1000 for their own submissions. This fee is substantially lower than nearly all existing Open Access article processing charges; although we cannot commit to this pricing, we will do everything possible to keep costs to a minimum, aiming for sustainability at the lowest possible publication fee. In general, respondents preferred that some of these fees be waived or reduced for OHBM members. In support of OHBM’s commitment to diversity and inclusion, there was also strong support for discounting or eliminating fees for researchers from developing countries.

Overall, there seems to be clear enthusiasm for a new kind of publishing platform by and for the OHBM community, and we hope that Aperture will fill this role. The project is still actively investigating potential technical platforms, but we hope to announce a final selection by the annual meeting in Rome. We would like to thank the OHBM members who took part in the survey, and we hope to start a dialogue with the entire community moving forward. We welcome questions or suggestions in the Comments section of this blog post, and we look forward to the conversation!
