
BY DAVID MEHLER

In a recent blog post we learned about the activities of the OHBM Committee on Best Practices in Data Analysis and Sharing (COBIDAS), whose members work on establishing recommendations and tools to increase transparency and reproducibility in human neuroimaging. Together with other early career researchers I was fortunate to recently attend a workshop dedicated to Advanced Methods for Reproducible Science. There, a number of pioneers in reproducible science discussed the challenges of the field and introduced ways to improve current practices. As part of this, Dr. Russell Poldrack discussed creating reproducible research pipelines for neuroimaging. Russ Poldrack is a professor of Psychology at Stanford University, where he also heads the Stanford Center for Reproducible Neuroscience. He presented an exciting new framework for reproducible neuroimaging called Brain Imaging Data Structure standard application (BIDS app). Russ agreed to an interview, providing an ideal opportunity to find out more about his views on the reproducibility crisis in science and get his recommendations for the field.

Whenever you find a seemingly good result – one that fits your prediction – assume that it occurred due to an error in your code. - Russ Poldrack

1) David Mehler (DM): How would you describe the reproducibility crisis in psychology and neuroimaging to a (tax paying) member of the public?

Russ Poldrack (RP): I would explain it like this: Some of the research practices that scientists have used in the last few decades have turned out to generate results that are less reliable than we thought they were. As we have come to recognize this, many researchers are trying to change how we do things so that our results are more reliable. This is the self-correcting nature of science; we are human and we make mistakes, but the hallmark of science is that we are constantly questioning ourselves and trying to figure out how to fix the problems and do better.
An important part of the problem is that researchers are not currently incentivized by the system to do reproducible research; there is much more pressure to publish large numbers of papers in high-profile journals, which focus more on splashy findings, than there is to make sure that those findings are reproducible.

2) DM: The definition of direct and conceptual replications can be debatable and it is not always clear how close a replication must be to the original study to count as a direct replication attempt. In neuroimaging, best practice for each element of the processing pipeline might change over time and these changes can affect the final result. In your view, what constitutes a successful direct replication in neuroimaging?

RP: It’s a challenging question. On the one hand, you would hope that the minor details don’t matter very much; if they do, then the result has limited generalizability and thus is probably not that important even if it’s true under those specific circumstances. On the other hand, we know from the work of Stephen Strother and his colleagues, and from the work of Josh Carp, that processing choices can make a substantial difference. In my opinion, what’s most important is that a replication attempts to be as close as possible to the original study in its details, recognizing that this will never be fully possible. If a well-powered replication attempt of an important study fails, then it’s the responsibility of the field to determine whether the replication attempt reflects true lack of effect, differences in methodological details, or random fluctuations. It’s worth remembering that some number of well-powered replication attempts will always fail due to chance even when there is a true effect, and thus a single replication failure should not necessarily cause us to abandon the initial finding.

3) DM: Do current open science/data practices favor senior researchers, who already have tenure and high impact publications, over junior researchers, who often must put in the extra work? If so, what can be done about it?

RP: Yes, definitely. Doing reproducible science will almost certainly make it harder to succeed by today’s criteria of large numbers of publications in high-profile journals. Just as one example, I have become convinced that pre-registration of study design and analysis plans is critical to improving our science. However, doing a pre-registered study makes it more likely that one will come up with null effects, because there is no flexibility to tweak the analysis until a significant effect is found. I think there are a few ways to address the problem. First, established researchers need to lead by example; if we can’t engage in open and transparent research practices then there is no way that we can expect the younger generation to do so. Second, we need to pay more attention to open and transparent practices when we are judging job applicants, tenure cases, and grant proposals. This is much harder than simply counting up numbers of publications and impact factors, but it’s the only way that we can ensure that people doing solid research have a chance of making it on the job market, since they will always be outgunned by those who use shoddier practices to get papers in high-profile journals. One way to help with this was suggested by Lucina Uddin in a recent Tweet, where she described adding a section titled "Contributions to Open Science" to her CV; I could see this listing things like shared datasets, code, and pre-registrations. This would help signal that one is committed to open and reproducible science.

4) DM: This brings us back to the role of incentive structures. Together with other OHBM committee members you have recently initiated an OHBM Replication Award for the best neuroimaging replication study.
What is your vision for a system that creates the “right” rewarding and incentive structures to promote data sharing and open science work?

RP: Foremost, people need to get credit for their efforts. The rise of “data papers” has helped with this, since now a person can get citation credit for a shared dataset when it’s used by others. Registered Reports are another good move in this direction, as they ensure that one will get a publication for a well-designed study regardless of the outcome. As I mentioned earlier, we also need to work to make these practices more central to our hiring and tenure decisions; changing these kinds of processes is challenging, and requires more senior researchers to take the lead, which many of us are trying to do but it’s an ongoing effort.

5) DM: Thanks Russ. Finally, what is your main message for early career neuroscientists? What would you advise them to look for when choosing a lab and planning their career path?

RP: First, focus on finding a scientific question that fascinates you. Science is full of long hours, intense criticism, and repeated disappointments, and only a burning scientific question will give you the continued motivation to persevere. Second, find a lab that shares your values. Talk to people in the lab and find out whether they have adopted the kinds of practices that would make you feel confident that your interest in openness and transparency will be supported and nurtured. Third, be open to change. It’s natural to make plans for the future, but often the world has different ideas for us, and it’s important to be able to take advantage of the best of whatever your situation has to offer you, even if it’s not what you initially planned for. Finally, realize that we are humans and we make mistakes, so that nothing you do will ever be perfect.
One unfortunate consequence of the reproducibility crisis is that it seems to have led many trainees to worry that their work is never quite good enough, and that someone in the future will find a flaw or fail to replicate their work. This is a problem because if you don’t get the work written up, you will never get credit for having done it, regardless of how clever the experiment was. Science is a process for attaining knowledge, not an endpoint, and we need to keep that in mind. We should do the best we can to make our work transparent and reproducible, but also realize that at some point you just have to put the work out there for the world to see.

Figure 2: Attendees and speakers of the Advanced Methods for Reproducible Science workshop at Cumberland Lodge, Windsor. The workshop was organized by Dr. Dorothy Bishop (top row, 5th from the left), Dr. Chris Chambers (top row, 4th from the left) and Dr. Marcus Munafo, and funded by the Biotechnology and Biological Sciences Research Council (BBSRC) and the European College of Neuropsychopharmacology (ECNP).


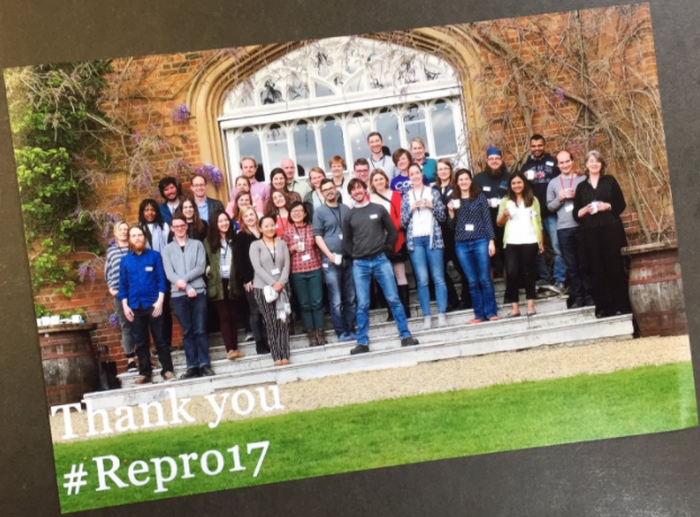

BLOG HOME

Archives

January 2024

|

RSS Feed

RSS Feed